Bayes Theorem in Machine Learning

Machine learning is one of the most active areas of AI. It is still a developing field, built on ideas such as supervised learning, unsupervised learning, reinforcement learning, perceptron models, and neural networks. This article, “Bayes Theorem in Machine Learning,” covers another of its foundations: the Bayes Theorem.

2 What is Bayes Theorem?

The Bayes Theorem is named after the English mathematician Thomas Bayes, who made significant contributions to probability and decision theory. The theorem is a popular tool in machine learning because it gives a principled way to compute the probability of each class. Machine learning applications that involve classification tasks use the Bayesian method of computing conditional probabilities.

The Naive Bayes classifier, a simplified application of the Bayes Theorem, is used to speed up computation and lower its cost. This article covers these ideas, along with the uses of the Bayes Theorem in machine learning.

3 Why Should We Use Bayes Theorem in Machine Learning?

The Bayes Theorem is a technique for computing conditional probabilities, that is, the probability of one event given that another has already happened. A conditional probability can lead to more precise conclusions because it takes additional conditions, or more data, into account.

Conditional probabilities are therefore essential for computing accurate probabilities and predictions in machine learning. As machine learning spreads across more domains, it is important to understand how techniques like the Bayes Theorem are used in it.

4 Implementing Bayes Theorem in Machine Learning

Bayes’ theorem can be derived from the product rule and the conditional probability of event X given a known event Y.

According to the product rule, the joint probability of X and Y can be written in terms of the probability of X given Y:

P(X ∩ Y) = P(X|Y) P(Y) {equation 1}

Likewise, it can be written in terms of the probability of Y given X:

P(X ∩ Y) = P(Y|X) P(X) {equation 2}

Equating the right-hand sides of the two equations and dividing by P(Y) gives the mathematical expression of the Bayes theorem:

P(X|Y) = P(Y|X) P(X) / P(Y)

Here, X and Y are any two events with P(Y) > 0. They do not need to be independent.

The equation above is called the Bayes Rule or Bayes Theorem.

P(X|Y) is the posterior, the quantity we need to determine. It is the revised probability of the hypothesis X after the evidence Y has been taken into account. P(Y|X) is the likelihood: the probability of observing the evidence Y given that the hypothesis X is true.

P(X) is the prior probability, the probability of the hypothesis before taking the evidence into account.

P(Y) is the marginal probability, the probability of the evidence regardless of the hypothesis.

Consequently, the Bayes Theorem can be expressed in words as:

posterior = likelihood * prior / evidence
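As a minimal sketch of the formula above, the Python function below computes a posterior from a likelihood, a prior, and the evidence. The function name `posterior` and all of the numbers are hypothetical and chosen only for illustration; the evidence is obtained here by summing over the two cases "X happens" and "X does not happen".

```python
def posterior(likelihood, prior, evidence):
    """Return P(X|Y) = P(Y|X) * P(X) / P(Y)."""
    if evidence <= 0:
        raise ValueError("P(Y) must be positive")
    return likelihood * prior / evidence


# Hypothetical numbers, for illustration only.
prior_x = 0.2                    # P(X)
likelihood_y_given_x = 0.6       # P(Y|X)
likelihood_y_given_not_x = 0.05  # P(Y|not X)

# P(Y), summed over the cases "X happens" and "X does not happen".
evidence_y = (likelihood_y_given_x * prior_x
              + likelihood_y_given_not_x * (1 - prior_x))

posterior_x = posterior(likelihood_y_given_x, prior_x, evidence_y)
print(round(posterior_x, 4))  # 0.75
```

With these assumed numbers, observing Y raises the probability of X from the prior of 0.2 to a posterior of 0.75.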

We must comprehend a few key ideas before we can fully appreciate the Bayes theorem. These are listed below:

4.1 Experiment

An experiment is a planned action performed under predetermined conditions, such as rolling a die or tossing a coin.

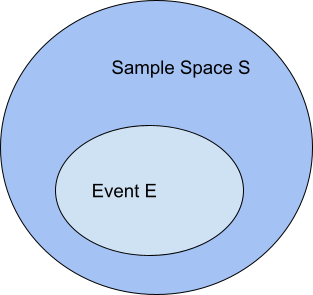

4.2 Sample Space

The results of an experiment are referred to as possible outcomes, and the set of all possible outcomes of an experiment is referred to as the sample space. For instance, the sample space for a roll of a die is

S1 = {1, 2, 3, 4, 5, 6}.

Similarly, if our experiment involves tossing a coin and recording the result, the sample space is S2 = {Head, Tail}.

4.3 Event

In an experiment, an event is a subset of the sample space, that is, a set of outcomes.

Assume there are two events, A and B, in our experiment of rolling a fair die.

Event A = {2, 4, 6}: an even number is rolled.

Event B = {5, 6}: a number greater than 4 is rolled.

The probability of event A is calculated as follows:

P(A) = Number of favorable outcomes / Number of possible outcomes

P(A) = 3/6 = 1/2 = 0.5

The probability of event B, P(B), is likewise the ratio of the number of favorable outcomes to the total number of possible outcomes:

P(B) = 2/6 = 1/3 ≈ 0.333

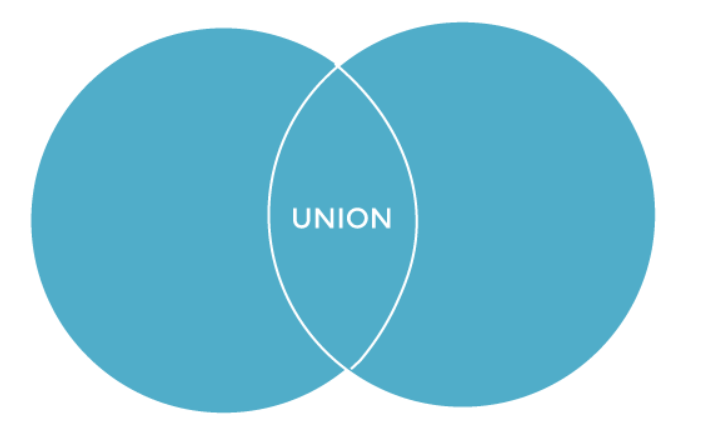

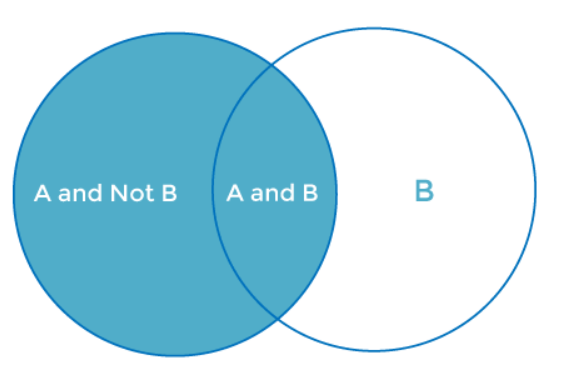
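The die example can be reproduced with Python sets. This is only a sketch: the helper name `probability` is hypothetical, and it assumes a fair die, so that every outcome is equally likely.

```python
from fractions import Fraction

sample_space = {1, 2, 3, 4, 5, 6}

# Event A: an even number is rolled. Event B: a number greater than 4.
event_a = {n for n in sample_space if n % 2 == 0}
event_b = {n for n in sample_space if n > 4}


def probability(event, space):
    """Favorable outcomes divided by possible outcomes (fair die assumed)."""
    return Fraction(len(event & space), len(space))


p_a = probability(event_a, sample_space)
p_b = probability(event_b, sample_space)
print(p_a, p_b)  # 1/2 1/3
```

Using `Fraction` keeps the results exact, so 1/3 is not rounded to 0.333.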

4.4 Union of Events A and B

A∪B = {2, 4, 5, 6}

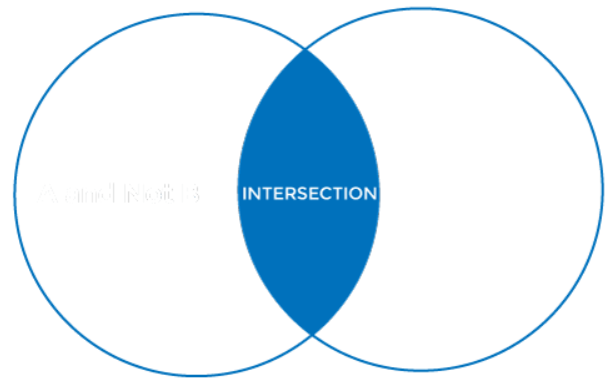

4.5 Intersection of Events A and B

A∩B = {6}

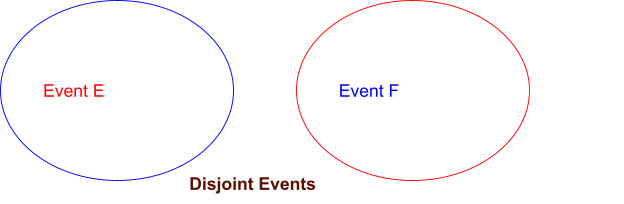

Disjoint Event: Events A and B are called disjoint, or mutually exclusive, if their intersection is the empty set. The events A and B above are not disjoint, because they share the outcome 6.
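Union, intersection, and the disjointness check map directly onto Python's set operations. The sketch below uses the die events from this section; the event `odd_numbers` is a hypothetical addition, included only to show a disjoint pair.

```python
event_a = {2, 4, 6}  # an even number is rolled
event_b = {5, 6}     # a number greater than 4 is rolled

union = event_a | event_b
intersection = event_a & event_b
print(sorted(union))         # [2, 4, 5, 6]
print(sorted(intersection))  # [6]

# Two events are disjoint when their intersection is empty.
odd_numbers = {1, 3, 5}  # hypothetical third event
print(event_a.isdisjoint(event_b))      # False, both contain 6
print(event_a.isdisjoint(odd_numbers))  # True
```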

4.6 Random Variable

A random variable is a real-valued function that maps each outcome in the sample space to a point on the real line. Each value it can take occurs with a certain probability. Despite its name, it is neither random nor a variable: it is a function, and it can be discrete, continuous, or a mixture of both.
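To make the idea of a random variable as a function concrete, the sketch below defines one on the sample space of two coin tosses. The example itself (two tosses of a fair coin, and the name `number_of_heads`) is an assumption made for illustration and does not come from the article.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Sample space of two coin tosses: ('H', 'H'), ('H', 'T'), ('T', 'H'), ('T', 'T').
sample_space = list(product("HT", repeat=2))


def number_of_heads(outcome):
    """Random variable X: maps an outcome to a real number."""
    return outcome.count("H")


# Distribution of X, assuming all four outcomes are equally likely.
counts = Counter(number_of_heads(outcome) for outcome in sample_space)
distribution = {value: Fraction(count, len(sample_space))
                for value, count in sorted(counts.items())}

for value, chance in distribution.items():
    print(value, chance)
# 0 1/4
# 1 1/2
# 2 1/4
```

The function is deterministic; the randomness comes entirely from which outcome of the experiment occurs.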

4.7 Exhaustive Event

As the name implies, a set of events is exhaustive if at least one of them is certain to occur whenever the experiment is performed.

Accordingly, two events A and B are exhaustive if either A or B must occur. In a coin toss, Head and Tail are exhaustive, and they are also mutually exclusive: the result is either a Head or a Tail.

4.8 Independent Event

Two events are said to be independent when the occurrence of one has no bearing on the occurrence of the other. In plain English, the probability of either event does not depend on the other. Mathematically, two events A and B are independent if:

P(A ∩ B) = P(A) * P(B)
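The independence condition can be checked numerically for the die events used earlier. This is a sketch assuming a fair die; the helper names and the third event `event_c` are hypothetical and serve only to show a pair that fails the test.

```python
from fractions import Fraction

sample_space = {1, 2, 3, 4, 5, 6}
event_a = {2, 4, 6}  # even number
event_b = {5, 6}     # greater than 4
event_c = {4, 5, 6}  # greater than 3 (hypothetical extra event)


def probability(event):
    """Probability of an event on a fair die."""
    return Fraction(len(event), len(sample_space))


def are_independent(first, second):
    """Check P(first ∩ second) == P(first) * P(second)."""
    return probability(first & second) == probability(first) * probability(second)


print(are_independent(event_a, event_b))  # True:  1/6 == 1/2 * 1/3
print(are_independent(event_a, event_c))  # False: 1/3 != 1/2 * 1/2
```

Note that A and B share an outcome and are still independent, so independence is not the same thing as being disjoint.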

4.9 Conditional Probability

The conditional probability of A given B is the probability of event A occurring given that another event B has already happened. It is written P(A|B) and, for P(B) > 0, is defined as follows:

P(A|B) = P(A ∩ B) / P(B)
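Applied to the die events A = {2, 4, 6} and B = {5, 6}, the definition can be sketched as follows. A fair die is assumed and the helper names are hypothetical. The last lines also confirm that the result agrees with the Bayes theorem.

```python
from fractions import Fraction

sample_space = {1, 2, 3, 4, 5, 6}
event_a = {2, 4, 6}
event_b = {5, 6}


def probability(event):
    """Probability of an event on a fair die."""
    return Fraction(len(event), len(sample_space))


def conditional(event, given):
    """P(event | given) = P(event ∩ given) / P(given)."""
    return probability(event & given) / probability(given)


p_a_given_b = conditional(event_a, event_b)
p_b_given_a = conditional(event_b, event_a)
print(p_a_given_b, p_b_given_a)  # 1/2 1/3

# Bayes theorem: P(B|A) = P(A|B) * P(B) / P(A)
via_bayes = p_a_given_b * probability(event_b) / probability(event_a)
print(via_bayes == p_b_given_a)  # True
```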

4.10 Marginal Probability

The marginal probability of an event A is the probability that A occurs regardless of any other event B. It is the quantity used as the probability of the evidence in the Bayes theorem, and it can be computed as:

P(A) = P(A|B)*P(B) + P(A|~B)*P(~B)

Here, “~B” stands for the scenario in which B does not happen.
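The marginal probability formula can be verified on the die example by splitting the sample space into B and its complement. This is a sketch assuming a fair die, with hypothetical helper names.

```python
from fractions import Fraction

sample_space = {1, 2, 3, 4, 5, 6}
event_a = {2, 4, 6}
event_b = {5, 6}
not_b = sample_space - event_b  # {1, 2, 3, 4}


def probability(event):
    """Probability of an event on a fair die."""
    return Fraction(len(event), len(sample_space))


def conditional(event, given):
    """P(event | given), counted directly from the outcomes."""
    return Fraction(len(event & given), len(given))


# P(A) = P(A|B) * P(B) + P(A|~B) * P(~B)
marginal_a = (conditional(event_a, event_b) * probability(event_b)
              + conditional(event_a, not_b) * probability(not_b))

print(marginal_a, probability(event_a))  # 1/2 1/2
```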

5 How to Implement Bayes Rule in Machine Learning?

Thanks to the Bayes theorem, we can calculate the single term P(B|A) in terms of P(A|B), P(B), and P(A). This rule comes in very handy when P(A|B), P(B), and P(A) can be estimated reliably and we need to determine the fourth term, P(B|A).

One of the simplest uses of the Bayes theorem in classification algorithms is the naive Bayes classifier, which is valued for its speed and accuracy in assigning data to classes. Let’s use the example below to better understand how the Bayes theorem is used in machine learning.

Consider a vector A that has i features:

A = (A1, A2, A3, A4, …, Ai)

Additionally, the n classes are denoted by C1, C2, C3, C4, …, Cn.

Given these two inputs, our machine learning classifier must predict the class of A, that is, select the most probable class. Using the Bayes theorem, we can formulate the probability of each class as follows:

P(Ci|A) = [P(A|Ci) * P(Ci)] / P(A)

Here, P(A) does not depend on the class.

Because P(A) stays constant across all classes, maximizing P(Ci|A) only requires maximizing the term P(A|Ci) * P(Ci).
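The following sketch illustrates why P(A) can be ignored when picking a class. The priors and likelihoods are hypothetical numbers for three classes, and the classes are assumed to be mutually exclusive and exhaustive so that P(A) is the sum of the per-class terms.

```python
# Hypothetical values, for illustration only.
priors = {"C1": 0.5, "C2": 0.3, "C3": 0.2}          # P(Ci)
likelihoods = {"C1": 0.10, "C2": 0.40, "C3": 0.30}  # P(A|Ci)

# Unnormalized scores P(A|Ci) * P(Ci).
scores = {name: likelihoods[name] * priors[name] for name in priors}

# P(A) is the same constant for every class.
evidence = sum(scores.values())
posteriors = {name: score / evidence for name, score in scores.items()}

best_by_score = max(scores, key=scores.get)
best_by_posterior = max(posteriors, key=posteriors.get)
print(best_by_score, best_by_posterior)  # C2 C2
```

Dividing every score by the same positive constant rescales the values without changing their order, so both selections agree.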

Let’s assume that each of the n classes is equally likely a priori:

P(C1) = P(C2) = P(C3) = P(C4) = … = P(Cn)

Under this assumption the prior is also constant, so only P(A|Ci) needs to be maximized, which cuts down both the cost and the time of computation. Naive Bayes simplifies this term further by assuming that the features are conditionally independent given the class C, so the likelihood factorizes as:

P(A|C) = P(A1|C) * P(A2|C) * P(A3|C) * … * P(Ai|C)

Therefore, by using the Bayes theorem in machine learning, the probability of a complex event can be expressed through the probabilities of simpler events.
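To show how the per-feature product is used in practice, here is a minimal from-scratch sketch of a naive Bayes classifier for categorical features. The dataset, the function names `train` and `predict`, and the use of add-one smoothing (so that unseen feature values do not produce a zero probability) are all assumptions made for this illustration and are not part of the article.

```python
from collections import Counter, defaultdict


def train(rows, labels):
    """Count classes and, per class, the values seen for each feature."""
    class_counts = Counter(labels)
    # feature_counts[label][feature_index][value] -> count
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    for row, label in zip(rows, labels):
        for index, value in enumerate(row):
            feature_counts[label][index][value] += 1
    return class_counts, feature_counts


def predict(row, class_counts, feature_counts, values_per_feature):
    """Return the class with the largest P(C) * product of P(Ak|C)."""
    total = sum(class_counts.values())
    scores = {}
    for label, count in class_counts.items():
        score = count / total  # prior P(C)
        for index, value in enumerate(row):
            seen = feature_counts[label][index][value]
            # Add-one smoothing (an assumption of this sketch).
            score *= (seen + 1) / (count + values_per_feature[index])
        scores[label] = score
    return max(scores, key=scores.get), scores


# Hypothetical toy data: (contains "offer", contains a link) -> label.
rows = [("yes", "yes"), ("yes", "no"), ("yes", "yes"),
        ("no", "no"), ("no", "yes"), ("no", "no")]
labels = ["spam", "spam", "spam", "ham", "ham", "ham"]

class_counts, feature_counts = train(rows, labels)
label, scores = predict(("yes", "yes"), class_counts, feature_counts, [2, 2])
print(label)  # spam
```

Each feature contributes one independent factor to the score, which is why training reduces to counting and prediction to a handful of multiplications.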

6 What is Naive Bayes Classifier in Machine Learning?

The naive Bayes classifier is a supervised technique that uses the Bayes theorem to solve classification problems. It is one of the most straightforward and efficient machine learning classification methods, allowing us to build models that make quick predictions. It is a probabilistic classifier: it makes predictions based on the probability that an object belongs to each class. Spam filtering, sentiment analysis, and article classification are some common applications of Naive Bayes.

7 Advantages of Naive Bayes in Machine Learning

- It is among the most straightforward and efficient approaches to conditional probability and text classification problems.

- Where the assumption of independent predictors holds, a Naive Bayes classifier can perform very well compared with other models.

- Compared to other models, it is simpler to apply.

- It needs only a small amount of training data to estimate its parameters, which keeps training time short.

- It can be used for both binary and multi-class classification.

8 Disadvantages of Naive Bayes in Machine Learning

The biggest drawback of Naive Bayes classifiers is the assumption that all features are independent of one another. In real data it is rarely possible to obtain mutually independent attributes, which limits how well the model fits.
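One way to see the cost of the independence assumption is to feed the same evidence in twice, as if it were two independent features. The sketch below uses hypothetical numbers and a hypothetical helper, `naive_posterior`, for a two-class problem.

```python
def naive_posterior(prior, likelihoods_pos, likelihoods_neg):
    """Posterior of the positive class under the naive product rule."""
    score_pos = prior
    score_neg = 1 - prior
    for like_pos, like_neg in zip(likelihoods_pos, likelihoods_neg):
        score_pos *= like_pos
        score_neg *= like_neg
    return score_pos / (score_pos + score_neg)


# Hypothetical values: one feature with P(f|pos) = 0.8 and P(f|neg) = 0.2.
once = naive_posterior(0.5, [0.8], [0.2])

# The same feature duplicated, so the two copies are perfectly correlated.
twice = naive_posterior(0.5, [0.8, 0.8], [0.2, 0.2])

print(round(once, 3), round(twice, 3))  # 0.8 0.941
```

The duplicate adds no new information, yet the posterior rises from 0.8 to about 0.94, because the model counts correlated evidence as if it were independent.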

9 Conclusion

New and still-developing technologies remain insufficient without the classical theorems and algorithms they build on, and the Bayes theorem is a well-known example in machine learning. It has numerous uses there and is a popular choice for classification tasks, so it is safe to say that it plays a significant role in the field. In this article, we have covered the Bayes Theorem, the Naive Bayes classifier, and how to use them in machine learning.

We hope this article is useful for gaining a fundamental understanding of Bayes Theorem in Machine Learning.